Meta’s recent overhaul of its content moderation policies represents a seismic shift with troubling implications for LGBTQI+ safety and human rights. Under the guise of championing free expression, Meta’s removal of critical safeguards threatens to amplify harmful narratives, entrench systemic bias, and enable digital violence. (See: Tech giants prioritising profit over safety.)

For marginalised communities, particularly LGBTQI+ individuals, this decision intensifies existing vulnerabilities by creating a digital environment that is less safe and more hostile. (See: Study on online gender-based violence.)

Digital platforms are responsible for aligning their content moderation and safety policies with international human rights standards, and for applying a rights-based approach when developing products and the policies that govern their use.

In prioritising free expression at the expense of adequate safeguards for all users, Meta risks creating an uneven playing field where harmful voices are amplified while marginalised voices are silenced.

Changes in Meta’s new content moderation policy and their impact

A closer look at the key policy changes reveals why this shift is dangerous:

Removal of protections against anti-LGBTQI+ hate speech

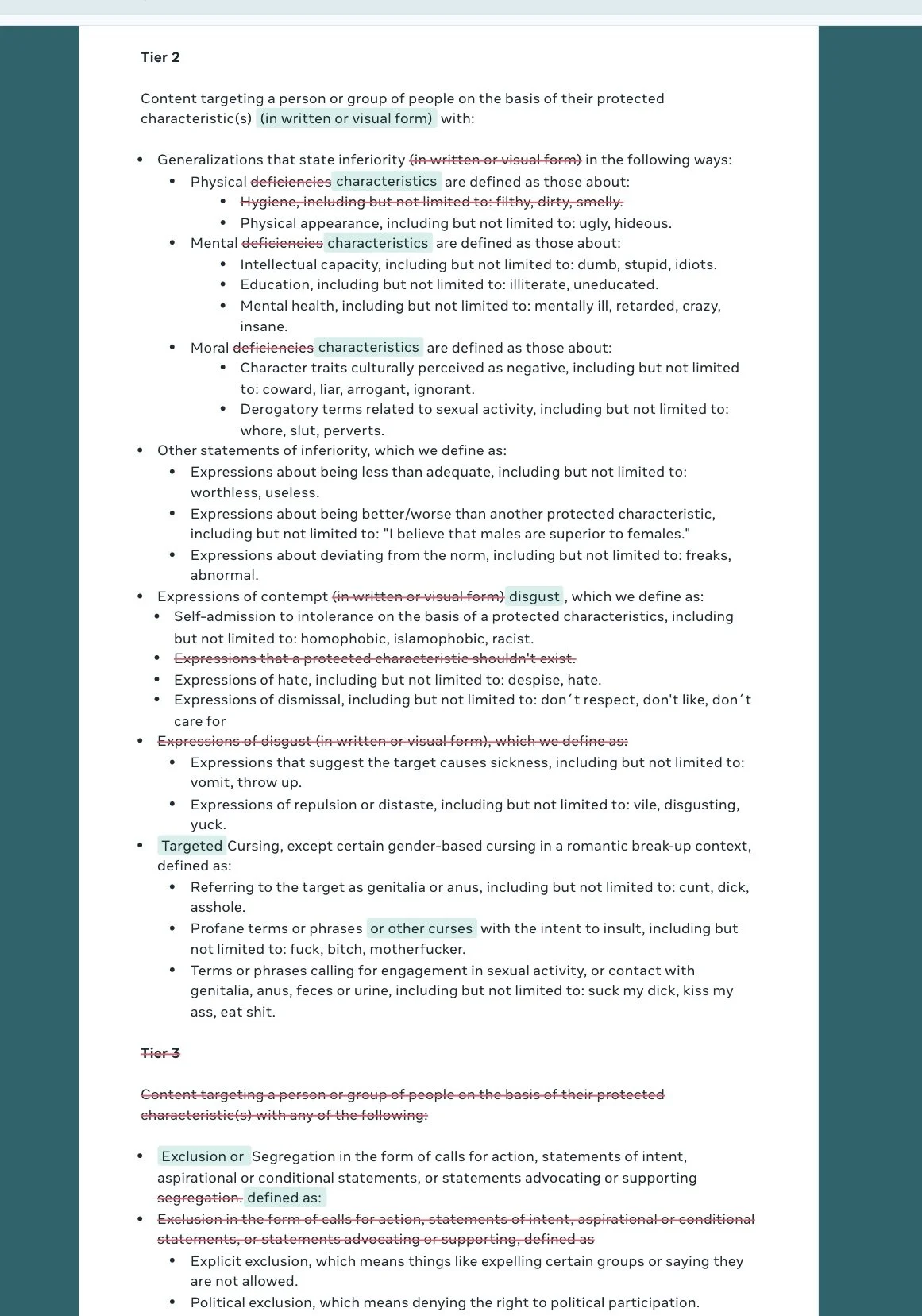

Meta’s old content policy against targeted discrimination

Under Meta’s previous policies, content that framed LGBTQI+ identity as a mental disorder, deviant behaviour, or immoral choice was classified as hate speech and removed.

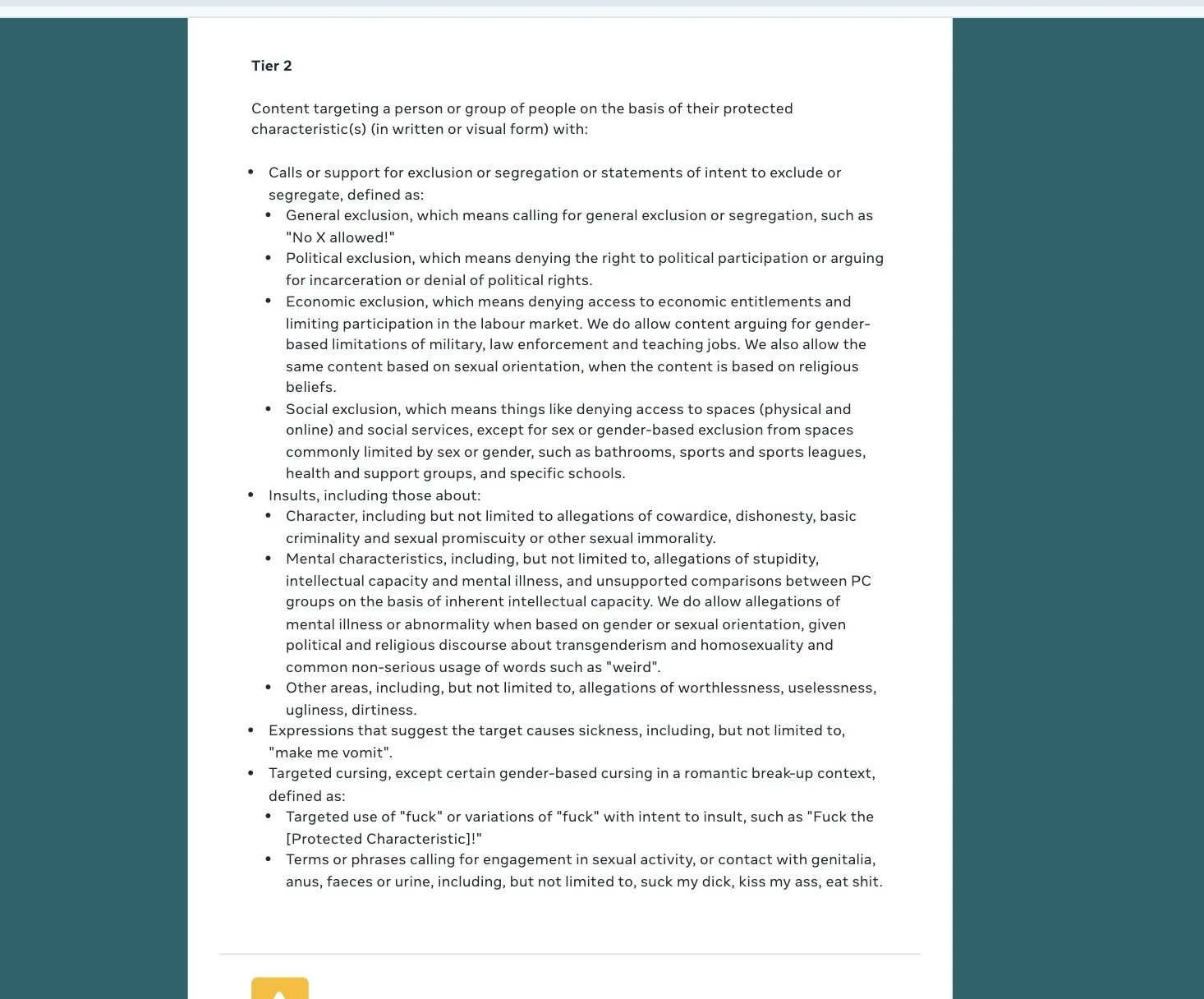

Meta’s new policy allowing “allegations of mental illness or abnormality when based on gender or sexual orientation”.

Impact: Allowing hate speech disguised as personal belief undermines the safety of LGBTQI+ individuals, particularly in regions where they are criminalised or subjected to violence. (See: Global criminalisation map.)

Threat to public health and advocacy by ending partnerships with independent fact-checkers

Meta’s decision to cease third-party fact-checking removes a vital layer of accountability in combating misinformation.

Impact: In societies already plagued by misinformation, the absence of fact-checking allows harmful narratives to spread unchecked, directly threatening public health campaigns and advocacy efforts.

Risks of community-based content moderation

Meta’s reliance on a crowdsourced model for moderation shifts responsibility from trained oversight to individual sentiment.

Impact: In countries where societal prejudice against LGBTQI+ people is deeply ingrained, majority-driven moderation institutionalises bias. (See: Study on online violence against LGBTQI+ persons.)

Risk of reinforcing colonial practices

Meta previously engaged marginalised communities in policy development and content moderation processes, helping identify harmful terms in Indigenous languages.

Impact: Abandoning this inclusive model signals a retreat from human rights commitments and risks turning content moderation into a tool for exclusion rather than empowerment.

Why Meta’s framing of free expression falls short

Meta positions these changes as a “necessary expansion of free expression”, but this fundamentally misunderstands the balance between freedom and protection.

When platforms fail to uphold protections, they privilege harmful voices at the expense of marginalised communities. By removing safeguards, Meta forfeits its responsibility to create a safer, more equitable internet.

The timing of these policy shifts raises concerns about corporate governance prioritising appeasement over human rights commitments.

Marginalised communities are often excluded from digital governance and decision-making processes, leading to policies that expose them to harm.

Standing alongside young LGBTQI+ persons, we maintain that:

- Digital platforms must balance free expression with accountability.

- Content governance must prioritise safety and dignity.

- Human rights impact assessments must guide policy shifts, not political or market pressure.

Meta has failed on all counts. Given its global influence, this rollback represents a serious failure of stewardship.

The internet we build today determines tomorrow's freedom. Digital spaces must be arenas of truth, safety, and empowerment, not breeding grounds for hate and disinformation.

We demand that Meta reconsider this dangerous course and restore robust, rights-based protections for all users.

Authors: Kenny Owen, Marline Oluchi and Lydia Ume

Editor: Lydia Ume